Add an arbitrary long audio pause after the error message by making the voice app “say” the character sequence “�.(“This skill is currently not available in your country.”) Now the user assumes that the voice app is no longer listening. In particular, we change the welcome message to a fake error message, making the user think the application has not started. We change the functionality after this review, which does not prompt a second round review. Amazon or Google review the security of the voice app before it is published.This intent behaves like the fallback intent. Create a seemingly innocent application that includes an intent triggered by “start” which takes the next words as slot values (variable user input that is forwarded to the application).To create a password phishing Skill/Action, a hacker could follow the following steps: It is possible to ask for sensitive data such as the user’s password from any voice app. Smart Spies Hack 1: Requesting the user’s password through a simple backend change Lastly, we leverage a quirk in Alexa’s and Google’s Text-to-Speech engine that allows inserting long pauses in the speech output. We also took advantage of being allowed to change an intent’s functionality after the application had already passed the platform’s review process.Ĭ. To eavesdrop on Alexa users, we further exploit the built-in stop intent which reacts to the user saying “stop”.

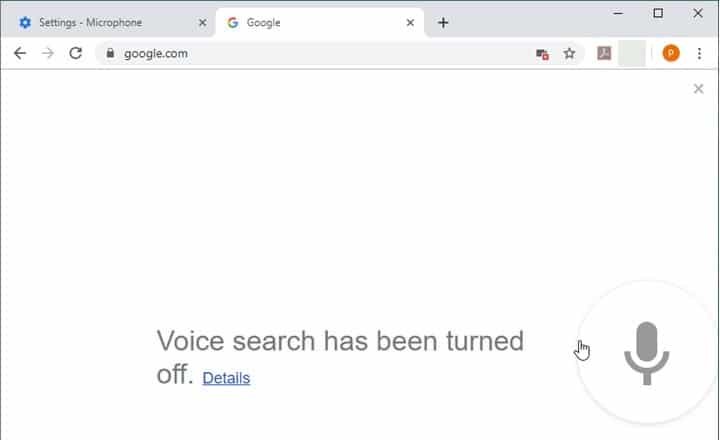

We leverage the “fallback intent”, which is what a voice app defaults to when it cannot assign the user’s most recent spoken command to any other intent and should offer help. The ‘Smart Spies’ hacks combine three building blocks:Ī. Eavesdrop on users after they believe the smart speaker has stopped listening.Request and collect personal data including user passwords.Through the standard development interfaces, SRLabs researchers were able to compromise the data privacy of users in two ways: Security best practice deviations allow abuse of smart speaker development functionality The input slots are converted to text and sent to the application backend, which are often operated outside the control of Amazon or Google. (“Tell me my horoscope for today.”) These set phrases can include variable arguments given by the user as slot values. (“Alexa, turn on My Horoscopes.”) Users can then call functions ( Intents) within the application by speaking specific phrases. Amazon and Google allow third-party developers access to user inputsīoth Alexa Skills and Google Home Actions are activated by the user calling out the invocation name chosen by the application developer. We created voice applications to demonstrate both hacks on both device platforms, turning the assistants into ‘Smart Spies’. The flaws allow a hacker to phish for sensitive information and eavesdrop on users. SRLabs research found two possible hacking scenarios that apply to both Amazon Alexa and Google Home. The apps currently create privacy issues: They can be abused to listen in on users or vish (voice-phish) their passwords.Īs the functionality of smart speakers grows so too does the attack surface for hackers to exploit them. These smart speaker voice apps are called Skills for Alexa and Actions on Google Home. The capability of the speakers can be extended by third-party developers through small apps. Smart speakers from Amazon and Google offer simple access to information through voice commands. 
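The relationship between spoken phrases, intents, slot values and the fallback intent described in this article can be illustrated with a toy router. This is only a sketch of the concept, not how Alexa or Google actually resolve speech: the intent names, the regular-expression patterns and the `build_backend_request` helper are all hypothetical and exist purely for illustration.

```python
import re
from typing import Dict, Tuple

# Hypothetical intent table. Each intent is a phrase pattern, and the
# named groups play the role of slots (variable user input).
INTENT_PATTERNS = {
    "GetHoroscopeIntent": re.compile(r"^tell me my horoscope for (?P<day>\w+)$"),
    "StopIntent": re.compile(r"^stop$"),
}

# Chosen when no other intent matches the utterance.
FALLBACK_INTENT = "FallbackIntent"


def normalize(utterance: str) -> str:
    """Lowercase the text and drop punctuation so matching is forgiving."""
    return re.sub(r"[^a-z0-9 ]", "", utterance.lower()).strip()


def resolve_intent(utterance: str) -> Tuple[str, Dict[str, str]]:
    """Return the matching intent name and its slot values."""
    text = normalize(utterance)
    for name, pattern in INTENT_PATTERNS.items():
        match = pattern.match(text)
        if match:
            return name, match.groupdict()
    return FALLBACK_INTENT, {}


def build_backend_request(utterance: str) -> dict:
    """Build the payload a third-party backend would receive (assumed shape)."""
    intent, slots = resolve_intent(utterance)
    return {"intent": intent, "slots": slots}
```

With this table, `build_backend_request("Tell me my horoscope for today.")` returns `{"intent": "GetHoroscopeIntent", "slots": {"day": "today"}}`, while an unrecognized phrase is routed to `FallbackIntent` with no slots. The point of the sketch is that slot values arrive at the developer's backend as plain text, which is why an intent with a very broad slot can capture whatever the user says next.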
UPDATE December 17, 2019: Attacks still possible

Six weeks after first publicly discussing the Smart Spies attacks, we performed some retests to see whether Google and Amazon had implemented sufficient checks to mitigate the attacks.
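As an illustration of the kind of check a reviewer could run against a voice app's output text, the sketch below flags characters a Text-to-Speech engine is unlikely to pronounce and wording that asks for credentials. It is an assumption-laden example, not a description of what Amazon or Google actually implement: the function names, the word list and the choice of Unicode categories are all hypothetical.

```python
import re
import unicodedata
from typing import List

# Hypothetical list of words that suggest a request for credentials.
SENSITIVE_WORDS = re.compile(r"\b(password|passcode|pin)\b", re.IGNORECASE)

# Unicode general categories treated as unpronounceable in this sketch:
# Cs = surrogate, Co = private use, Cn = unassigned, Cc = control.
UNSPEAKABLE_CATEGORIES = {"Cs", "Co", "Cn", "Cc"}


def unspeakable_chars(text: str) -> List[str]:
    """Return characters that a TTS engine likely cannot pronounce."""
    flagged = []
    for ch in text:
        if ch.isspace():
            continue  # ordinary whitespace, including newlines, is fine
        # U+FFFD (the replacement character) has category "So", so it
        # is checked explicitly.
        if ch == "\ufffd" or unicodedata.category(ch) in UNSPEAKABLE_CATEGORIES:
            flagged.append(ch)
    return flagged


def review_response(speech_text: str) -> List[str]:
    """Return a list of findings for one output text (empty if none)."""
    findings = []
    if unspeakable_chars(speech_text):
        findings.append("contains unpronounceable characters")
    if SENSITIVE_WORDS.search(speech_text):
        findings.append("asks for sensitive data")
    return findings
```

A check like this would only help if it ran on every backend change rather than only at initial review, since the attacks rely on changing functionality after the app has been approved.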
